RO-MAN 2023

High-Speed, High-Quality Robotic Portrait Drawing System

This work treats robotic portrait drawing as a systems problem: how to produce recognizable physical portraits quickly while preserving enough quality to make the result compelling.

Shady Nasrat, Taewoong Kang, Jinwoo Park, Joonyoung Kim, Seung-Joon Yi


INTRODUCTION

Robotic portrait drawing has long interested researchers because it poses both technical and creative challenges. With the widespread adoption of collaborative robots, a growing number of robotic portrait drawing systems have recently been built on them. However, drawing a high-quality, detailed portrait can be a lengthy task that requires a very large number of strokes. As a result, many of these approaches have struggled to balance the quality of the output drawing against the efficiency of the drawing process, and have prioritized one of the two goals. Some approaches take a long time, sometimes measured in hours, to produce a highly detailed drawing, whereas others greatly simplify the facial features so that the drawing can be completed in a reasonably short time.

In this paper, we introduce a real-time autonomous drawing system that leverages recent machine learning techniques to achieve both goals. Our system uses a human keypoint detection algorithm to detect and crop the dominant human face from a video feed, and then uses the Cycle Generative Adversarial Network (CycleGAN) algorithm to style-transfer the image into a black-and-white sketch image. From the sketch image, line extraction and path optimization algorithms generate optimized waypoints for a robotic arm to trace physically. Finally, a 6-DOF robotic arm with a Chinese calligraphy pen quickly draws the portrait, with the pen pressure dynamically adjusted to vary the width of the strokes.

To test the effectiveness of our system, we conducted extensive real-world experiments with various volunteer groups. The results demonstrate that our system produces high-quality portrait drawings with an average drawing time of 80 seconds while keeping most of the facial details intact. The system was also demonstrated to the general public at the RoboWorld 2022 exhibition, where it drew portraits of more than 40 visitors with a satisfaction rate of 95%.

The rest of the paper proceeds as follows. The Related Work section reviews previous robotic portrait drawing systems in two broad categories and presents the relative advantages of the proposed system over them. The Method section describes in detail each of the four modules that make up the proposed system. The Experimental Results section presents the experimental results, including a public demonstration at the RoboWorld 2022 exhibition. Finally, the Conclusion section closes with the future direction of this work.

RELATED WORK

Robotic portrait drawing has long been a challenging task because it is difficult both to preserve fine details and to achieve short drawing times. In an effort to overcome these challenges, previous methods have utilized a variety of drawing tools, including paint brushes Artistic?, Robotic?, Painting?, painted?, artwork?, Painting?, Robot?, and Cartoon?, which often struggle to capture fine details and are time-consuming. For instance, Painting?, Robot? employed paint brush techniques to create highly detailed portraits, but this required a large number of strokes and a lengthy drawing time: a single portrait took 9000 strokes over a period of 17 hours.

Other methods that utilize pens or pencils, such as Stylized?, Paul?, Humanoid?, RoboCoDraw?, and closed-loop?, also have the disadvantage of being time-consuming or failing to preserve facial details in the drawings. Over the past few years, researchers have applied various machine learning techniques in an effort to improve the efficiency of robotic portrait drawing. For instance, Gao et al. in Vivid? used GAN-based style transfer to reduce the number of strokes required for drawing sketches, resulting in a shorter drawing time. However, this approach resulted in the simplification of portrait drawings. Similarly, Tianying et al. in RoboCoDraw? used GAN-based style transfer to transform a target face image into a simplified cartoon character, which reduced the average drawing time to 43.2 seconds. While these techniques may be efficient, they often sacrifice the preservation of facial details in the drawing.

To address these challenges, our proposed system combines machine learning techniques with a path optimization algorithm to generate detailed sketches efficiently. The algorithm reduces drawing time by minimizing unnecessary movements when the robotic arm travels to the start point of the next line. Table presents a comparison of previous robotic portrait systems.

METHOD

The proposed system comprises four main modules. The Portrait Generation Module captures the input images: it extracts high-quality RGB portrait images from the camera so that the subsequent steps can be performed with a high level of accuracy. The Sketch Generation Module uses the CycleGAN to create sketches from the RGB portrait images. The Drawing Motion Generation Module converts these sketch images into traceable navigational waypoints, which the Robot Motion Control Module then follows on the physical canvas. Smooth and precise execution of this process is essential to the quality of the final drawing. Each module is discussed in a separate section below.

Portrait Generation Module

We use the OpenPose algorithm to crop human faces, as portraits or regions of interest (ROI), from camera frames because of its accuracy and efficiency. The algorithm is based on a convolutional neural network (CNN) that is trained to identify and locate keypoints on a person’s body, such as the shoulders, elbows, wrists, and ankles. By detecting these keypoints, the algorithm can accurately crop the person’s face from the frame, even when the face is partially occluded or the person is at an unusual angle. Because it can run in real time, it is well suited to this purpose.

We also utilize the OpenPose algorithm to extract the position of the eyes from the input portrait image. The extracted information regarding the eye position is subsequently utilized in the path optimization module, which generates the optimal path for the robotic arm to follow when executing the drawing of the eyes.
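The cropping step can be illustrated with a minimal sketch. It assumes the keypoint detector has already returned head keypoints as `(x, y, confidence)` tuples; the dictionary keys, the `margin` and `min_conf` parameters, and both function names are hypothetical choices for this example and are not taken from OpenPose or from the system described here.

```python
def face_roi(keypoints, frame_w, frame_h, margin=0.5, min_conf=0.3):
    """Return a square (left, top, right, bottom) box around the head.

    keypoints maps a (hypothetical) name to (x, y, confidence).
    Returns None when fewer than two head keypoints are confident.
    """
    head = ("nose", "left_eye", "right_eye", "left_ear", "right_ear")
    pts = [keypoints[n][:2] for n in head
           if n in keypoints and keypoints[n][2] >= min_conf]
    if len(pts) < 2:
        return None
    xs = [p[0] for p in pts]
    ys = [p[1] for p in pts]
    cx = (min(xs) + max(xs)) / 2
    cy = (min(ys) + max(ys)) / 2
    # Half side length: half of the larger keypoint extent, plus a margin.
    half = max(max(xs) - min(xs), max(ys) - min(ys)) * (0.5 + margin)
    # Clamp the box to the frame boundaries.
    left = max(0, int(cx - half))
    top = max(0, int(cy - half))
    right = min(frame_w, int(cx + half))
    bottom = min(frame_h, int(cy + half))
    return left, top, right, bottom


def eyes_in_roi(keypoints, roi):
    """Express the eye keypoints in the coordinate frame of the cropped ROI."""
    left, top, _, _ = roi
    return {n: (keypoints[n][0] - left, keypoints[n][1] - top)
            for n in ("left_eye", "right_eye") if n in keypoints}
```

The eye coordinates are shifted into the crop's frame so that later stages, which only see the cropped portrait, can locate the eye region without access to the full camera frame.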

Sketch Generation Module

In our proposed system, we utilized the CycleGAN Unpaired? to learn a mapping between the domain of real faces (\(X_{real}\)) and the domain of sketch-style avatars (\(Y\)). This module is designed to preserve the consistency of important facial features, such as haircuts, face shapes, and eye shapes, while learning the mapping between the two domains. The CycleGAN learns this mapping without the need for paired training data or manual labels, which makes it an effective and efficient tool for generating portrait sketches.

The structure of the CycleGAN is shown in Fig. Real training faces are denoted as \(X_{real}\), reconstructed faces are denoted as \(X_{fake}\), and the generated sketches are denoted as \(Y\). A deep generator network \(G_{XY}\) converts a face image \(X_{real}\) into a sketch image \(Y\). A second deep network \(G_{YX}\) reverses the process, reconstructing a face image \(X_{fake}\) from the sketch image \(Y\). A mean squared error (\(MSE\)) loss is then used to control the training of \(G_{XY}\) and \(G_{YX}\).
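The exact form of the loss is not spelled out here. As a hedged illustration, assuming the \(MSE\) term compares each real face with its reconstruction pixel by pixel, and writing \(N\) for the number of pixels (a symbol introduced only for this sketch), the constraint could be written as

\[
X_{fake} = G_{YX}\bigl(G_{XY}(X_{real})\bigr), \qquad
MSE = \frac{1}{N}\sum_{i=1}^{N}\bigl(X_{real}^{(i)} - X_{fake}^{(i)}\bigr)^{2}.
\]

Under this reading, minimizing \(MSE\) pushes \(G_{XY}\) to keep enough facial information in \(Y\) for \(G_{YX}\) to recover the original face.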

The drawing discriminator \(D_{D}\) distinguishes generated portrait line drawings from real ones. To enforce the presence of important facial features in the generated drawing, in addition to a discriminator \(D\) that analyzes the full drawing, we add three local discriminators \(D_{ln}\), \(D_{le}\), and \(D_{ll}\) that focus on the nose drawing, eye drawing, and lip drawing, respectively. The inputs to these local discriminators are masked drawings, where the masks are obtained from a face parsing network. \(D_{D}\) thus consists of \(D\), \(D_{ln}\), \(D_{le}\), and \(D_{ll}\).

Drawing Motion Generation Module

In this module, our focus is to extract the minimum number of lines while maintaining all facial features. The extracted lines are then converted into waypoint data for the robot motion module. Our goal is to accurately and efficiently generate a portrait sketch through the use of advanced machine learning techniques and optimized line extraction.

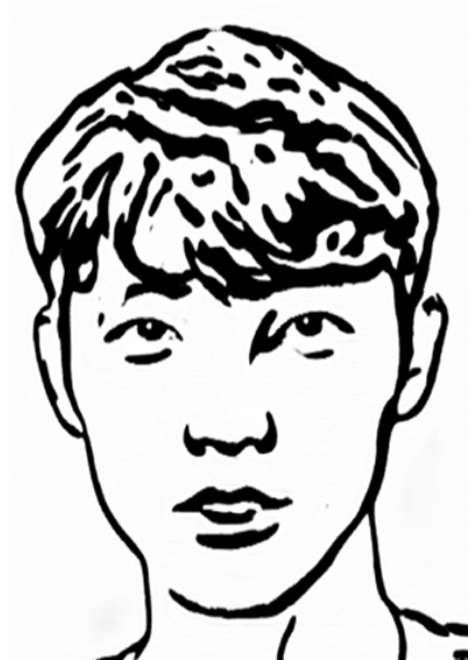

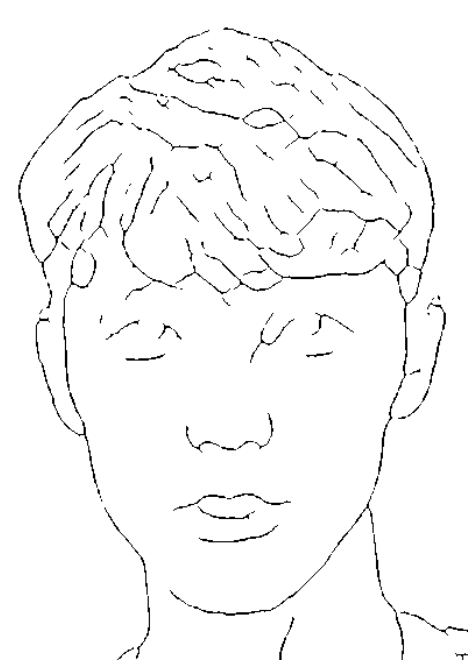

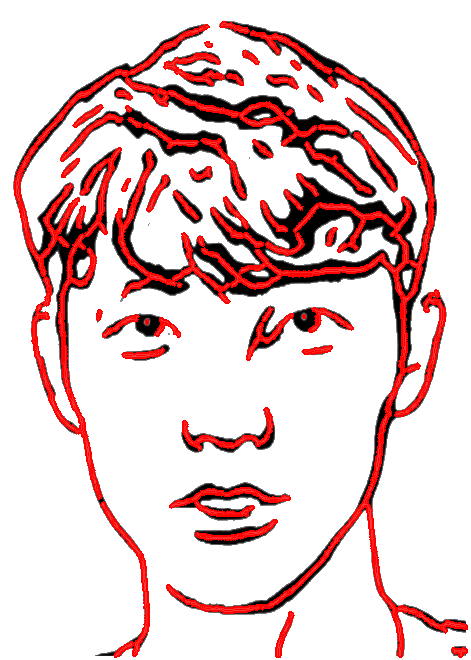

| (a) | (b) | (c) | (d) |

Skeleton extraction

As the first step of drawing motion generation, we apply morphological transformations as preprocessing before extracting motion waypoints, simplifying the sketch into a structure of lines that facilitates further processing. Two morphological transform algorithms are used for skeletonization: the opening algorithm, which performs erosion followed by dilation to smooth pixel edges and remove isolated pixels, and the closing algorithm, which performs dilation followed by erosion to fill small holes. The opening algorithm was primarily used to extract most of the sketch lines, while the closing algorithm was utilized mainly for the eye sketches to provide better details and improve the overall accuracy of the system.
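The two operators can be illustrated with a minimal pure-Python sketch on a binary image stored as a list of rows. It assumes a 3×3 square structuring element and treats pixels outside the image as background; both are choices made for this example, since the kernel and border handling used in the system are not stated.

```python
OFFSETS = [(dy, dx) for dy in (-1, 0, 1) for dx in (-1, 0, 1)]


def _is_set(img, y, x):
    """True if (y, x) is inside the image and is a foreground pixel."""
    return 0 <= y < len(img) and 0 <= x < len(img[0]) and img[y][x] == 1


def erode(img):
    """Keep a pixel only if its whole 3x3 neighbourhood is foreground."""
    return [[int(all(_is_set(img, y + dy, x + dx) for dy, dx in OFFSETS))
             for x in range(len(img[0]))] for y in range(len(img))]


def dilate(img):
    """Set a pixel if any pixel in its 3x3 neighbourhood is foreground."""
    return [[int(any(_is_set(img, y + dy, x + dx) for dy, dx in OFFSETS))
             for x in range(len(img[0]))] for y in range(len(img))]


def opening(img):
    """Erosion followed by dilation: removes isolated pixels."""
    return dilate(erode(img))


def closing(img):
    """Dilation followed by erosion: fills small holes."""
    return erode(dilate(img))
```

With this border convention, closing can eat into shapes that touch the image edge, so a practical pipeline would typically pad the image first.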

Lines Extraction

To extract lines from the sketch image, we apply a pixel-to-pixel algorithm. It searches for the pixel nearest to the current pixel and appends it to the current line array if the two are connected; if they are not connected, the pixel starts a new line array. This method extracts the lines of the sketch, including the facial features, efficiently while keeping the number of lines and the processing time low. The extracted lines are the input to the remaining steps of this module.
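The tracing rule described above can be sketched as follows. This is a minimal illustration under stated assumptions: "connected" is taken to mean 8-connectivity, the trace starts from the smallest `(x, y)` pixel, and the function name `extract_lines` is hypothetical.

```python
def extract_lines(pixels):
    """Group foreground pixels into lines by nearest-pixel tracing.

    pixels: iterable of (x, y) integer coordinates of foreground pixels.
    Returns a list of lines, each a list of (x, y) in tracing order.
    """
    remaining = set(pixels)
    lines = []
    if not remaining:
        return lines
    current = min(remaining)  # deterministic starting pixel (assumption)
    remaining.discard(current)
    line = [current]
    while remaining:
        # Nearest unvisited pixel; ties are broken by coordinate order.
        nxt = min(remaining,
                  key=lambda p: ((p[0] - current[0]) ** 2
                                 + (p[1] - current[1]) ** 2, p))
        remaining.discard(nxt)
        connected = max(abs(nxt[0] - current[0]),
                        abs(nxt[1] - current[1])) <= 1
        if connected:
            line.append(nxt)    # extend the current line
        else:
            lines.append(line)  # close it and start a new line
            line = [nxt]
        current = nxt
    lines.append(line)
    return lines
```

The linear nearest-pixel search makes this quadratic in the number of pixels, which is acceptable for a thin line sketch but would call for a spatial index on dense images.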

Lines Clustering And Waypoints Generation

In the proposed system, we implemented a line clustering algorithm that groups lines by their spatial proximity to one another, with the aim of minimizing the number of lines that the robotic arm must draw. Lines that lie in close proximity are identified as a cluster and merged into a single line. This approach reduced the average number of lines drawn to 49%, leading to faster and more efficient production of high-quality drawings. As an illustration, the lowest line count in Fig. was reduced to 45 lines, resulting in a drawing time of 75 seconds.
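The clustering criterion is not specified in detail, so the following is only a sketch of one plausible reading: two lines are merged when any pair of their endpoints lies within a distance threshold. The threshold `max_gap` and the function name are hypothetical.

```python
import math


def merge_close_lines(lines, max_gap=3.0):
    """Greedily join lines whose endpoints are within max_gap of each other."""
    pool = [list(line) for line in lines if line]
    merged = []
    while pool:
        cur = pool.pop(0)
        grew = True
        while grew:
            grew = False
            for i, other in enumerate(pool):
                if math.dist(cur[-1], other[0]) <= max_gap:
                    cur = cur + other            # tail -> head
                elif math.dist(cur[-1], other[-1]) <= max_gap:
                    cur = cur + other[::-1]      # tail -> tail, flip other
                elif math.dist(cur[0], other[-1]) <= max_gap:
                    cur = other + cur            # other's tail -> our head
                elif math.dist(cur[0], other[0]) <= max_gap:
                    cur = other[::-1] + cur      # head -> head, flip other
                else:
                    continue
                pool.pop(i)
                grew = True
                break  # restart the scan with the longer line
        merged.append(cur)
    return merged
```

Each merge removes one pen lift, which is where the time saving comes from; a larger `max_gap` merges more aggressively at the cost of drawing short connecting segments that were not in the sketch.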

Once the lines have been clustered, a waypoint generation algorithm extracts the necessary information from the sketch image. We set the buffer of the waypoint generation algorithm to 250 waypoints per line, which maintains a high level of precision while keeping the drawing process efficient. Each generated waypoint contains not only the position on the path that the arm should follow but also the thickness of the line being drawn at that point.

After the waypoints have been generated, they are passed to the robot motion control module, which uses them to guide the arm along the desired path. This allows the arm to smoothly trace the lines in the sketch and produce high-quality drawings in a timely manner.
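A minimal sketch of the waypoint buffer follows. It assumes the 250-waypoint figure acts as an upper bound per line, that long lines are subsampled evenly by index, and that a per-pixel stroke width is already available; the function name and the `(x, y, width)` tuple layout are hypothetical.

```python
def to_waypoints(line, widths, buffer_size=250):
    """Convert one line into at most buffer_size (x, y, width) waypoints.

    line:   list of (x, y) pixel coordinates in drawing order.
    widths: stroke width at each pixel, same length as line.
    """
    n = len(line)
    if n <= buffer_size:
        indices = range(n)
    else:
        # Evenly spaced indices that always keep the first and last pixel.
        indices = [round(i * (n - 1) / (buffer_size - 1))
                   for i in range(buffer_size)]
    return [(line[i][0], line[i][1], widths[i]) for i in indices]
```

For example, a 1000-pixel line is reduced to 250 waypoints that still start and end at the original endpoints, while a 3-pixel line is passed through unchanged.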

Eye Handling

To further improve the accuracy and natural appearance of the eye sketch, we separate the eye sketch from the rest of the image and process the two separately. Specifically, we used the Opening algorithm for the eye sketch and the Closing algorithm for the rest of the image. This approach captured the details of the eye without smoothing out or filling in any features, as had happened with the Closing algorithm in the first version. As a result, the final sketch had a more natural appearance and preserved the details of the eye.

Robot Motion Control Module

We implemented a path optimization algorithm and a ROS-based communication system to improve the efficiency and precision of the robotic arm’s portrait drawing process. The path optimization algorithm calculates the most efficient path for the arm to reach the next starting point, minimizing unnecessary movements and reducing drawing time. To ensure that the robotic arm has enough information to accurately draw a single line, we set the waypoint navigational buffer to 250 points, as determined by the previously discussed waypoint generation algorithm. The ROS-based communication system allows smooth and precise control of the robotic arm during the drawing process. Together, these techniques resulted in a smooth and efficient drawing process that produces high-quality portrait sketches in a timely manner.
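The path optimization algorithm itself is not detailed here. As a stand-in, the sketch below uses a greedy nearest-neighbour heuristic: after finishing a line, the pen moves to whichever remaining line has the closest endpoint, and that line is drawn from that endpoint. The function names and the `start` position are hypothetical, and the greedy rule is an assumption rather than the method used in the system.

```python
import math


def order_lines(lines, start=(0.0, 0.0)):
    """Order (and possibly reverse) lines to shorten pen-up travel."""
    remaining = [list(line) for line in lines]
    ordered = []
    pen = start
    while remaining:
        best_i, best_rev, best_d = 0, False, float("inf")
        for i, line in enumerate(remaining):
            # Consider entering each line from either of its endpoints.
            for rev, entry in ((False, line[0]), (True, line[-1])):
                d = math.dist(pen, entry)
                if d < best_d:
                    best_i, best_rev, best_d = i, rev, d
        line = remaining.pop(best_i)
        if best_rev:
            line.reverse()
        ordered.append(line)
        pen = line[-1]  # the pen ends where the line ends
    return ordered


def pen_up_travel(lines, start=(0.0, 0.0)):
    """Total distance travelled between lines with the pen lifted."""
    total, pen = 0.0, start
    for line in lines:
        total += math.dist(pen, line[0])
        pen = line[-1]
    return total
```

Comparing `pen_up_travel` before and after `order_lines` gives a quick estimate of how much non-drawing motion the ordering removes; the greedy rule is not guaranteed to find the shortest possible tour.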

EXPERIMENTAL RESULTS

Lab experiments

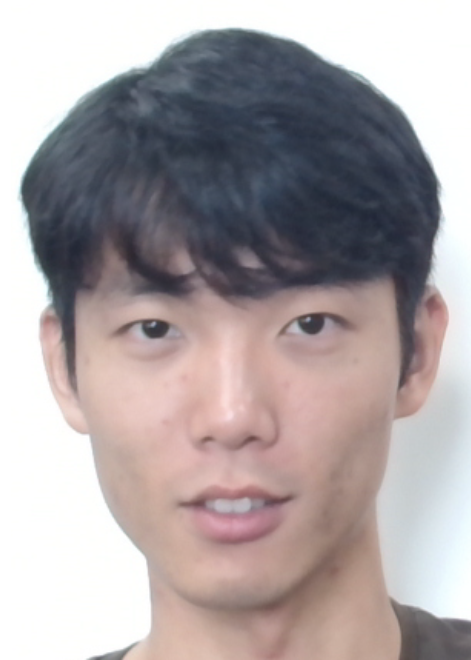

Our system was evaluated through a laboratory experiment conducted with a cohort of students, who were instructed to generate portraits using the system. The experimental results demonstrate that the system is capable of producing highly detailed and visually pleasing sketches.

Open demonstration

Our system was fully tested and demonstrated in November 2022 at the RoboWorld exhibition, where it drew portraits of more than 40 volunteers as shown in Fig. The system received a high satisfaction rate of 95% among the participants, indicating its effectiveness and reliability in producing high-quality portrait sketches in a time-efficient manner. As a result of its outstanding performance, our system was honored with the Korean Intellectual Property Office Award at the competition. The success of our system at the RoboWorld exhibition demonstrates its potential for practical application in a variety of settings, including galleries, studios, and educational institutions.

CONCLUSION

In conclusion, the proposed system represents a significant advancement in the field of robotic portrait drawing. By combining machine learning techniques such as CycleGAN with morphological transformations and path optimization, the proposed system generates visually appealing and accurate sketches rapidly and efficiently. Our real-world experiments at the RoboWorld exhibition have demonstrated the effectiveness, stability, robustness, and flexibility of our approach, with an average drawing time of 80 seconds per portrait and a high satisfaction rate of 95% among the participants.

*This project was funded by the Police-Lab 2.0 Program (www.kipot.or.kr), funded by the Ministry of Science and ICT (MSIT, Korea) and the Korean National Police Agency (KNPA, Korea) (No. 082021D48000000), and by a Korea Institute for Advancement of Technology (KIAT) grant funded by the Korea Government (MOTIE) (P0008473, HRD Program for Industrial Innovation).

Authors are with Faculty of Electrical Engineering, Pusan National University, Busan, South Korea

seungjoon.yi@pusan.ac.kr